The Five Illusions of Responsible AI in Agentic Systems

Breaking the wroongs

Introduction

In my ebook Responsible AI in Practice: From Intake to Incident, I introduced the concept of the Five Illusions — the assumptions that quietly derail responsible AI programmes before they even get started.

Those illusions were written with a broad enterprise AI lens. Models behind APIs. Copilots assisting knowledge workers. AI features embedded in products.

But something has shifted.

We’ve moved from AI that advises to AI that acts.

Agentic systems — autonomous agents, multi-agent orchestrations, declarative agents in tools like Microsoft Copilot Studio — don’t wait for a human to copy-paste a suggestion into an email.

They send the email. They trigger the workflow. They escalate the incident.

That shift from suggestion to action changes the stakes of every illusion.

In this post, I want to revisit each of the five illusions through the lens of agentic AI and share what I’ve learned from building enterprise agents in the real world.

Illusion 1: “We Have a Policy, So We’re Covered”

The original illusion: Many organisations believe that publishing a responsible AI policy — a PDF on the intranet, a set of principles on the website — means the work is done.

The illusion is that policy equals practice.

How agents make it worse:

When AI was advisory, a gap between policy and practice meant a human might ignore a guideline. Annoying, but recoverable.

When an agent autonomously executes a decision — say, triaging an employee crisis request or routing a customer complaint — that gap becomes a gap between intention and outcome.

The agent doesn’t know your policy exists unless it’s been encoded into its behaviour.

What to do instead:

For agentic systems, your responsible AI policy must be executable.

In Copilot Studio, this means encoding guardrails directly into:

- The agent’s system prompt

- Its topic design

- Its knowledge source boundaries

When I built the CS Lite crisis management agent at Sulava, the response structure itself — a strict four-part format — was a policy decision made executable. The agent couldn’t deviate because the architecture wouldn’t let it, not because someone wrote a guideline saying “please be consistent.”

If a guardrail isn’t in the architecture, it doesn’t exist.

Illusion 2: “The Model Is the Risk”

The original illusion: Teams focus their entire risk assessment on the foundation model — its bias, its hallucinations, its training data.

They treat the model as the single point of failure.

How agents make it worse:

In agentic systems, the model is just one node in a chain.

Consider a multi-agent orchestration:

- An anomaly detection agent flags an issue

- A root cause analyser interprets it

- An action recommender proposes a fix

- An orchestrator decides which recommendation to execute

The risk isn’t in any single model. It’s in the handoffs, the aggregation logic, and the decision to act.

I’ve seen this firsthand when building multi-agent demos with Copilot Studio and Azure AI Foundry. The individual agents can each behave responsibly in isolation. But the orchestrator that stitches them together introduces emergent behaviour that no single agent’s guardrails can catch.

An anomaly agent might flag a low-confidence signal. The root cause agent might interpret it generously. The recommender might escalate it.

Suddenly you have an aggressive automated response to a non-issue — and no single agent was “wrong.”

What to do instead:

Map your risk surface across the entire agent chain, not just the model layer.

- Every handoff between agents is a potential failure point

- Log what each agent contributed

- Track confidence levels passed forward

- Record what the orchestrator decided

Responsible AI for agents is as much about architecture as it is about alignment.

Illusion 3: “Human-in-the-Loop Solves Everything”

The original illusion: When in doubt, add a human reviewer. This is the safety net teams reach for when they can’t fully trust the AI’s output.

How agents make it worse:

The entire point of agentic AI is to reduce human bottlenecks.

If every agent action requires human approval, you haven’t built an agent — you’ve built a suggestion engine with extra steps.

But if you remove the human entirely, you’ve traded a bottleneck for a blindspot.

The real danger is what I call the “approval fatigue” pattern:

- Teams start with human-in-the-loop for every action

- The volume becomes unmanageable

- Reviewers start rubber-stamping

- The human-in-the-loop becomes a human-in-the-loop in name only

The governance box is ticked, but nobody is actually governing.

What to do instead:

Design for selective human involvement based on risk tiers.

Not every agent action carries the same weight.

- A crisis agent providing general safety information? Low risk — let it run.

- The same agent escalating a mental health concern to HR? High risk — require human confirmation.

In the CS Lite agent, we handled this by designing specific escalation paths: routine guidance runs autonomously, but anything involving named contacts or sensitive scenarios triggers a handoff protocol.

The architecture decides when humans are needed — not a blanket policy.

Illusion 4: “Testing Before Launch Is Enough”

The original illusion: Teams invest heavily in pre-deployment testing — red teaming, bias audits, accuracy benchmarks — and then consider the system validated.

Ship it and move on.

How agents make it worse:

Agents operate in dynamic environments. They interact with live data, real users, and changing contexts.

An agent that passed every test in staging can behave unpredictably in production because the world changed, not the agent.

Here’s a concrete example:

While working with Azure AI Foundry’s content filtering, I encountered a false positive where the openAIIndirectAttack filter flagged a perfectly legitimate aviation compliance PDF as a prompt injection attempt.

No amount of pre-launch testing would have caught this because the specific document didn’t exist in the test environment.

The agent was blocked from doing its job by a safety system that was technically working as designed.

What to do instead:

Shift from “test then deploy” to continuous monitoring.

- Build feedback loops that capture agent behaviour in production

- Track not just errors, but near-misses

- Log topic triggers, knowledge source hits, and fallback rates

- Establish a regular cadence for reviewing agent telemetry

Responsible AI for agents is an ongoing practice, not a pre-launch checklist.

Illusion 5: “Responsible AI Is the AI Team’s Job”

The original illusion: Organisations treat responsible AI as a technical discipline owned by the data science or AI team.

Ethics boards exist in isolation. Business stakeholders aren’t involved.

How agents make it worse:

Agentic systems cross organisational boundaries in ways that traditional AI doesn’t.

- A crisis management agent touches HR, legal, employee wellbeing, and IT operations

- A sales orchestration agent spans marketing, customer success, and revenue operations

If responsible AI is siloed in the AI team, nobody is asking whether the business process the agent automates should be automated at all.

The multi-tenant architecture challenge makes this even more visible. When building tenant-configurable agents — where different business units or clients can customise the agent’s prompts and behaviour — responsible AI governance can’t live in a single team.

Each tenant’s configuration is a new risk surface.

What to do instead:

Embed responsible AI accountability at the business process level, not just the technology level.

Every agent should have a named process owner — not a technical owner, but someone who understands the business context and can answer:

- Should this action be automated?

- What happens when it goes wrong?

- Who is accountable for the outcome?

In a multi-tenant setup, each tenant configuration should go through its own lightweight review.

Responsible AI governance should scale with the system, not sit above it.

Moving from Illusion to Practice

The common thread across all five illusions is this:

Agentic AI collapses the distance between decision and action.

When AI only advised, there was always a buffer — a human who could catch a mistake, question a recommendation, or simply close the tab.

Agents remove that buffer by design.

That’s not inherently dangerous. It’s powerful. But it demands a different kind of rigour.

A Practical Checklist

If you’re building enterprise agents today — whether in Copilot Studio, Azure AI Foundry, or custom code — here’s a starting point:

- ✅ Make policies executable — If a guardrail isn’t in the architecture, it doesn’t exist

- ✅ Map the full agent chain — Risk lives in handoffs, not just models

- ✅ Design selective human oversight — Tier your agent actions by risk, not by blanket policy

- ✅ Monitor continuously — Pre-launch testing is necessary but never sufficient

- ✅ Distribute accountability — Every agent needs a business process owner, not just a technical one

Closing Thought

The Five Illusions don’t disappear when you move to agentic AI. They deepen.

The good news is that once you see them clearly, you can build systems that are not just autonomous — but genuinely trustworthy.

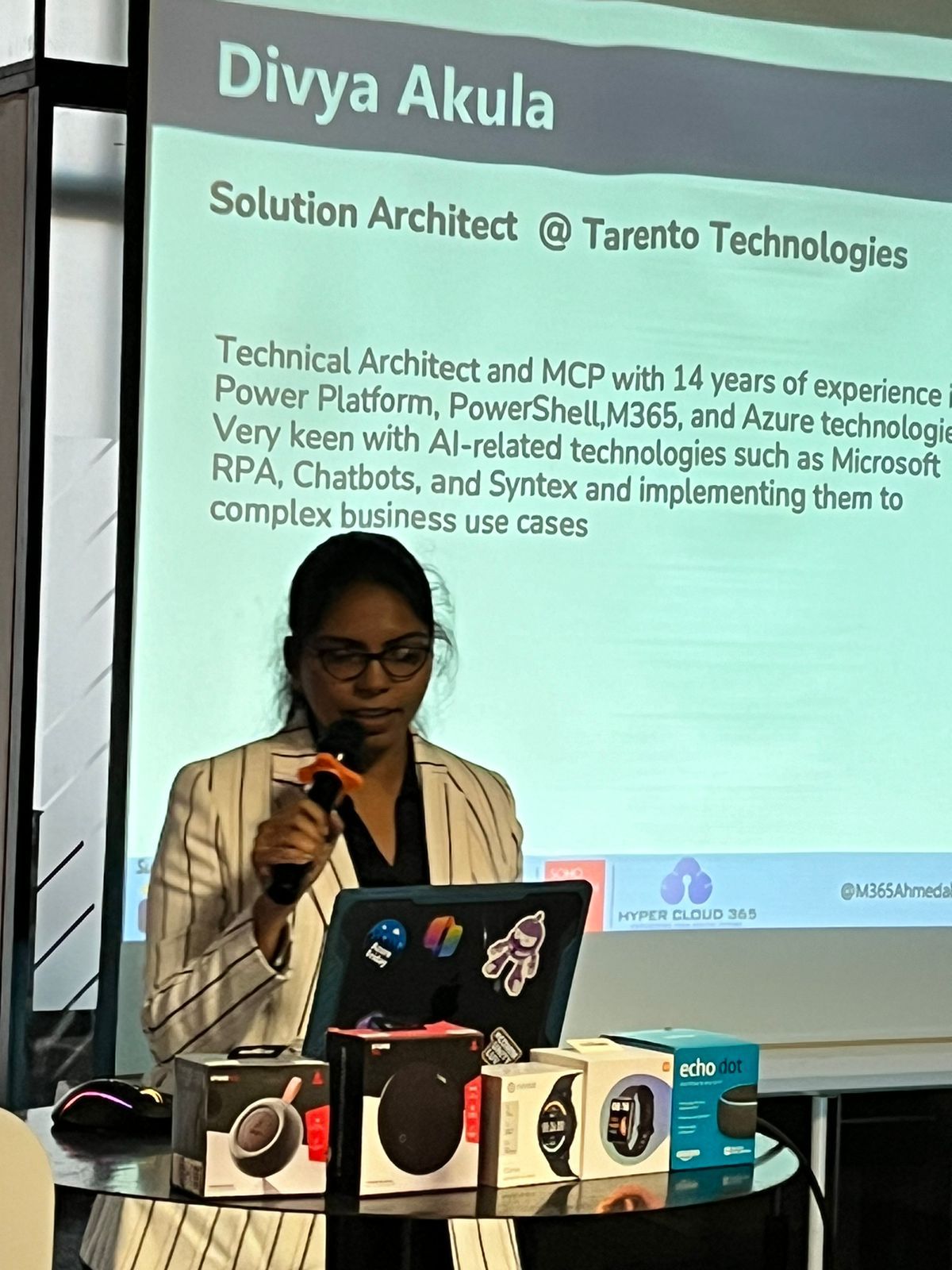

This post extends ideas from my free ebook, Responsible AI in Practice: From Intake to Incident, available on GitHub under CC BY-NC-ND 4.0. If you’re building enterprise agents and want to talk responsible AI architecture, find me on LinkedIn or at divyaakula.com.

Subscribe via RSS

Comments